A Complete 2026 Guide for Modern Cloud Teams

Kubernetes has become the de facto standard for container orchestration, powering everything from start-ups experimenting with microservices to large enterprises running global-scale workloads. But as adoption accelerates, so does a problem that many engineering and finance leaders didn’t anticipate: runaway Kubernetes costs.

This complete guide draws from real-world implementations to share battle-tested Kubernetes cost optimization strategies that slash spend by 40–70% while preserving (or even improving) performance and reliability. We’ll also show how platforms like CloudMonitor automate visibility, recommendations, and enforcement for sustainable results.

Why Kubernetes Costs Still Spiral in 2026

Even mature teams struggle because Kubernetes hides complexity behind powerful abstractions. Common culprits include:

- No clear view of per-service, namespace, or team-level costs

- Requests far exceeding actual usage (the #1 waste driver)

- Forgotten “zombie” workloads, orphaned volumes, and unused load balancers

- Over-provisioned or poorly binned nodes

- Aggressive or misconfigured autoscaling

- Hidden networking, storage, data egress, and observability fees

- Poor labeling breaking accurate attribution

Recent industry data suggests that more than 68% of organizations overspend on Kubernetes by 20–40% or more, often because of misconfigurations and a lack of ongoing governance. In 2026, with AI workloads and larger clusters, these gaps are even more expensive.

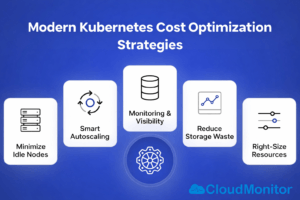

Top Kubernetes Cost Optimization Strategies for 2026

Here are the most impactful, field-proven techniques used by leading cloud-native teams today.

Rightsize Requests and Limits – Still the #1 Savings Lever

Scheduling relies on requested resources, not actual consumption. Audits in 2026 routinely find 80–90% of pods with inflated CPU/memory requests “just in case.”

Fix it:

- Analyze historical pod usage with tools like CloudMonitor

- Set requests to the P95–P99 of observed usage, plus a small headroom

- Enforce via LimitRanges, ResourceQuotas, and automated guardrails

- Leverage Vertical Pod Autoscaler (VPA) for ongoing adjustments
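As a rough sketch of the percentile approach in the list above, the Python snippet below derives a suggested CPU request from historical usage samples. The sample values, the 15% headroom, and the function names are illustrative assumptions, not output from CloudMonitor or any other tool.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based nearest rank
    return ordered[max(rank, 1) - 1]

def recommend_request(samples, pct=95, headroom=0.15):
    """Suggested request: the chosen usage percentile plus fractional headroom."""
    return percentile(samples, pct) * (1 + headroom)

# Hypothetical CPU usage in millicores for one container (assumed data).
cpu_millicores = [120, 135, 110, 160, 140, 150, 130, 145, 400, 125,
                  138, 142, 128, 155, 132, 148, 136, 144, 126, 152]

suggested = recommend_request(cpu_millicores, pct=95, headroom=0.15)
print(f"suggested CPU request: {round(suggested)}m")
```

With these made-up samples, the single 400m spike falls above the P95 cut-off, so the suggestion tracks typical load rather than the worst case. Choosing P99 instead would include the spike, which is the trade-off between savings and safety margin.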

Pro tip: Even 5–15% per-pod reductions can compound into 30–60% cluster savings when they let the scheduler pack the same pods onto fewer nodes.

Master Autoscaling – Configure for Efficiency, Not Just Speed

Cluster Autoscaler, HPA, VPA, Karpenter, and KEDA are powerful, but only when tuned aggressively for scale-down.

2026 best practices:

- Set short scale-down delays (e.g., 5–10 min idle)

- Combine HPA + VPA for pod-level intelligence, but do not let both act on the same CPU or memory metric

- Adopt event-driven scaling (KEDA) for bursty workloads

- Use Karpenter for faster, smarter node provisioning

Misconfiguration still causes nodes to linger empty—proper setup prevents this.
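To see why targets matter for scale-down, it helps to look at the replica calculation the Kubernetes documentation describes for the HPA: desired replicas = ceil(current replicas × current metric / target metric), with no change when the ratio is within a tolerance (0.1 by default). The sketch below is a simplified Python rendering of that rule. It ignores stabilization windows, pod readiness, and multiple metrics, and the example numbers are made up.

```python
import math

def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified HPA-style replica calculation (assumption: single metric)."""
    ratio = current_metric / target_metric
    # Within tolerance of the target: leave the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))

# Hypothetical readings: average CPU utilization (%) against a 60% target.
print(desired_replicas(4, 90, 60))                  # load above target
print(desired_replicas(6, 20, 60, min_replicas=2))  # load well below target
print(desired_replicas(4, 63, 60))                  # within tolerance
```

A target set too low keeps the ratio above 1 even at modest load, so replicas (and the nodes under them) never shrink. A high `min_replicas` floor has the same effect.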

Leverage Spot & Discounted Instances Aggressively

Spot instances (or the preemptible equivalents on other clouds) can deliver 70–90% savings over on-demand pricing and remain a top lever in 2026, especially with better interruption handling.

Ideal for: Batch, CI/CD, ML training, stateless services, event-driven jobs

Avoid for: Stateful apps, latency-critical workloads

Modern tools blend spot + on-demand with automatic fallbacks and checkpointing.
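The effect of a spot and on-demand mix is easy to estimate before committing to it. The Python sketch below computes a blended hourly cost. The node count, hourly price, spot share, and discount are hypothetical inputs chosen for illustration, not quotes from any provider.

```python
def blended_hourly_cost(node_count, on_demand_price, spot_fraction, spot_discount):
    """Hourly cost of a pool where a fraction of nodes run on spot capacity."""
    if not 0 <= spot_fraction <= 1 or not 0 <= spot_discount <= 1:
        raise ValueError("fractions must be between 0 and 1")
    spot_nodes = node_count * spot_fraction
    on_demand_nodes = node_count - spot_nodes
    spot_price = on_demand_price * (1 - spot_discount)
    return on_demand_nodes * on_demand_price + spot_nodes * spot_price

# Assumed scenario: 20 nodes at $0.40/h, 60% on spot at a 70% discount.
baseline = blended_hourly_cost(20, 0.40, spot_fraction=0.0, spot_discount=0.70)
mixed = blended_hourly_cost(20, 0.40, spot_fraction=0.6, spot_discount=0.70)
savings = 1 - mixed / baseline

print(f"on-demand only: ${baseline:.2f}/h")
print(f"60% spot mix:   ${mixed:.2f}/h ({savings:.0%} saved)")
```

Note that the pool-level saving is smaller than the headline spot discount, because the on-demand share still pays full price. That share is what buys the fallback capacity during interruptions.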

Eliminate Orphaned & Zombie Resources

Clusters accumulate waste: detached PVs, unused LBs, CrashLoopBackOff pods, forgotten snapshots, idle node groups.

Solution: Automate monthly (or real-time) cleanups via policies, scripts, or platforms like CloudMonitor that flag and suggest removals.
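As one small example of a scripted cleanup check, the Python sketch below scans PersistentVolume data in the shape returned by `kubectl get pv -o json` and flags volumes whose phase is not `Bound`. The sample volumes are invented, and the script only reports candidates for review. It deliberately deletes nothing.

```python
import json

# PV phases worth a human look; "Bound" volumes are in active use.
REVIEW_PHASES = {"Available", "Released", "Failed"}

def find_unbound_volumes(pv_list):
    """Return (name, phase) pairs for PersistentVolumes that may be orphaned."""
    flagged = []
    for item in pv_list.get("items", []):
        phase = item.get("status", {}).get("phase", "Unknown")
        if phase in REVIEW_PHASES:
            flagged.append((item["metadata"]["name"], phase))
    return flagged

# Trimmed, hypothetical sample mimicking `kubectl get pv -o json` output.
sample = json.loads("""
{
  "items": [
    {"metadata": {"name": "pv-orders-db"}, "status": {"phase": "Bound"}},
    {"metadata": {"name": "pv-old-ci-cache"}, "status": {"phase": "Released"}},
    {"metadata": {"name": "pv-unused-scratch"}, "status": {"phase": "Available"}}
  ]
}
""")

for name, phase in find_unbound_volumes(sample):
    print(f"review: {name} ({phase})")
```

In practice the JSON could be piped in from the CLI on a schedule, with the flagged names sent to the owning team rather than removed automatically.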

Optimize Node Selection & Bin-Packing

The wrong instance types can double costs even with perfect pod sizing.

2026 tactics:

- Prefer compute-optimized and Graviton/ARM instances where workloads support them (up to 40% cheaper)

- Use GPUs only when required

- Maximize bin-packing density to run fewer nodes

- Separate node pools by workload class (e.g., CPU vs. memory heavy)
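To make the bin-packing point concrete, the Python sketch below estimates node counts with a first-fit decreasing heuristic on CPU requests only. This is a deliberate simplification and not how the Kubernetes scheduler works: real placement also weighs memory, affinity rules, and DaemonSet overhead. The pod sizes and the 3500m allocatable capacity are assumed values.

```python
def nodes_needed(pod_requests, node_capacity):
    """First-fit decreasing estimate of nodes needed for CPU requests (millicores)."""
    free = []  # remaining capacity on each node opened so far
    for request in sorted(pod_requests, reverse=True):
        if request > node_capacity:
            raise ValueError("a pod does not fit on an empty node")
        for i, remaining in enumerate(free):
            if request <= remaining:
                free[i] = remaining - request
                break
        else:
            # No existing node had room, so open a new one.
            free.append(node_capacity - request)
    return len(free)

# Assumed scenario: 30 identical pods on nodes with 3500m allocatable CPU.
before = nodes_needed([1000] * 30, node_capacity=3500)  # 3 pods fit per node
after = nodes_needed([850] * 30, node_capacity=3500)    # 4 pods fit per node

print(f"nodes before rightsizing: {before}")
print(f"nodes after rightsizing:  {after}")
```

In this toy case a 15% cut in per-pod requests lets a fourth pod fit on each node, so the node count drops by more than 15%. The gain depends entirely on whether the smaller requests cross such a packing threshold.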

Build True Cost Visibility & Chargeback

Accountability drives lasting change. Enforce granular labeling:

- app: frontend

- team: product

- env: prod

- cost-center: FIN-2026-01

- owner: devops

This unlocks per-team, per-app, per-namespace reporting, trends, and alerts.
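A minimal sketch of what those labels enable, assuming per-workload monthly costs are already available from a billing export: the Python below checks for the five label keys listed above and rolls cost up by any one of them. The workload names and dollar figures are hypothetical.

```python
from collections import defaultdict

# Label keys taken from the labeling scheme above.
REQUIRED_LABELS = ("app", "team", "env", "cost-center", "owner")

def missing_labels(labels):
    """Return the required label keys that are absent or empty."""
    return [key for key in REQUIRED_LABELS if not labels.get(key)]

def cost_by_label(workloads, key):
    """Sum monthly cost per label value; unlabeled spend goes to 'unallocated'."""
    totals = defaultdict(float)
    for workload in workloads:
        totals[workload["labels"].get(key, "unallocated")] += workload["monthly_cost"]
    return dict(totals)

full = {"team": "product", "env": "prod",
        "cost-center": "FIN-2026-01", "owner": "devops"}

# Hypothetical workloads with assumed monthly costs in dollars.
workloads = [
    {"name": "frontend", "monthly_cost": 1200.0, "labels": {"app": "frontend", **full}},
    {"name": "checkout", "monthly_cost": 800.0, "labels": {"app": "checkout", **full}},
    {"name": "legacy-cron", "monthly_cost": 300.0, "labels": {"app": "legacy-cron"}},
]

print(cost_by_label(workloads, "team"))
for workload in workloads:
    gaps = missing_labels(workload["labels"])
    if gaps:
        print(f"{workload['name']} is missing labels: {', '.join(gaps)}")
```

The "unallocated" bucket is the number to watch: it shows how much spend cannot be attributed to any team until the labeling gaps are closed.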

CloudMonitor delivers automated allocation and chargeback reports across EKS, AKS, and GKE.

Account for Non-Compute Costs

Often overlooked: load balancer fees, PV storage, egress, secrets managers, logging/monitoring add-ons.

Audit these separately; many teams find they exceed compute waste.

Adopt a Dedicated Kubernetes Cost Platform (Like CloudMonitor)

Manual efforts don’t scale in 2026. CloudMonitor provides:

- Pod- and namespace-level cost breakdown

- AI-powered rightsizing & node recommendations

- Spend forecasting and anomaly alerts

- Automated cleanup suggestions

- Multi-cloud visibility (EKS/AKS/GKE)

- Team-specific dashboards

Shift from reactive firefighting to proactive, continuous Kubernetes cost optimization.

Conclusion: Make Kubernetes Cost Optimization a Continuous Discipline in 2026

Kubernetes delivers unmatched agility and scale—but without disciplined controls, it becomes one of your largest cloud expenses.

By prioritizing rightsizing, intelligent autoscaling, spot usage, resource cleanup, node efficiency, and full visibility, forward-thinking teams consistently achieve 40–70% reductions.

In 2026, top performers treat Kubernetes cost optimization as an ongoing engineering practice, powered by automation and data-driven platforms like CloudMonitor. This empowers engineering, FinOps, and leadership to align innovation with financial discipline, turning Kubernetes from a cost center into a strategic advantage.

Start with an audit today. The savings (and peace of mind) are waiting.

Take Control of Your Kubernetes Costs Today

Start optimizing your container and Kubernetes costs with CloudMonitor.ai.

Book a demo or explore dashboards to see how real-time cost monitoring can transform your cloud financial management.
