Why Traditional FinOps Isn’t Enough in 2026

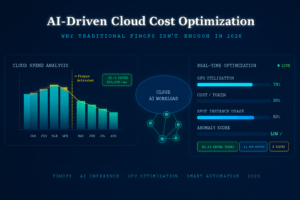

AI-driven cloud cost optimization is becoming essential for modern enterprises as cloud spending continues to grow rapidly in 2026. Traditional FinOps practices that once kept cloud costs under control are no longer enough for AI-powered workloads, GPU infrastructure, and dynamic cloud environments.

Cloud bills are changing. In 2026 the driver of runaway cloud spend is no longer just idle VMs and forgotten snapshots – it’s AI: large models, always-on inference, bursty training jobs, and complex hybrid architectures that mix serverless, containers, and specialized accelerator fleets. If your FinOps practice looks the same as it did in 2020–2022, it’s already behind. This post explains why, shows what AI-driven FinOps looks like, and gives a practical roadmap you can start using today.

Key players to watch

- AWS

- Google Cloud

- Microsoft Azure

- CloudZero

- CAST AI

- FinOps Foundation

The hard facts (short)

A majority of FinOps teams are now directly managing AI spending – that’s a rapid shift from previous years and changes both tooling and governance needs. (data.finops.org)

Inference (model-serving) costs compound with usage: every user interaction can multiply spend, so model efficiency and placement decisions directly affect margin. (CloudZero)

Vendors and cloud-native platforms report that AI-aware automation can drive large, repeatable savings: quoted figures range from the mid-20s to 60% in targeted workloads (K8s rightsizing, spot/interruptible usage, workload placement). Real results vary by workload. (Cast AI)

Why traditional FinOps falls short for AI workloads

Predictability is gone. Traditional FinOps relies heavily on forecasting and procurement (reservations, savings plans) based on stable, seasonal usage patterns. AI workloads – especially inference for large language models (LLMs) – are highly variable (requests per minute, model choice, output length) and can spike unpredictably. The old monthly-bucket forecasting model fails to capture those dynamics. (CloudZero)

Granularity and telemetry gaps. Cost signals from GPUs, TPU reservations, model API calls, and third-party LLM services are different from simple CPU/RAM metrics. Meaningful optimization needs per-model, per-endpoint telemetry tied to business metrics (revenue per request, latency SLA, etc.), not just cloud line items. (finops.org)

Speed of optimization. Manual rightsizing reviews and quarterly reserved instance decisions are too slow when model choice or serving topology can double costs overnight. You need continuous, automated decision-making in close to real time. (Cast AI)

New cost levers and tradeoffs. For AI you trade off model accuracy vs latency vs cost vs carbon. Those are multi-dimensional decisions best handled with modeling, ML-driven prediction, and automated policies – not spreadsheets and Slack threads.

What “AI-driven FinOps” actually does (the core capabilities)

Telemetry → semantic cost mapping. Instrument model pipelines so each token, request, GPU hour, and storage object maps to a product metric (customer, feature, experiment). This makes cost actionable as product telemetry, not just a bill line.
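To make the mapping idea concrete, here is a minimal sketch that rolls per-request token usage up to a customer or feature. The record fields, model names, and per-token prices are all hypothetical placeholders; real prices would come from your provider's billing data.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical unit prices (currency units per 1,000 tokens), for illustration only.
PRICE_PER_1K_TOKENS = {"small-model": 0.0005, "large-model": 0.01}


@dataclass
class RequestRecord:
    customer: str
    feature: str
    model: str
    tokens: int


def cost_by_dimension(records, dimension):
    """Sum estimated token cost per value of `dimension`
    ('customer', 'feature', or 'model')."""
    totals = defaultdict(float)
    for record in records:
        cost = record.tokens / 1000 * PRICE_PER_1K_TOKENS[record.model]
        totals[getattr(record, dimension)] += cost
    return dict(totals)


records = [
    RequestRecord("acme", "search", "small-model", 2000),
    RequestRecord("acme", "chat", "large-model", 1500),
    RequestRecord("globex", "chat", "large-model", 500),
]

by_customer = cost_by_dimension(records, "customer")
by_feature = cost_by_dimension(records, "feature")
```

The same records answer both "which customer costs the most?" and "which feature costs the most?", which is the point of tagging at request level rather than reading the bill by service.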

Predictive spend and anomaly detection. ML models forecast near-term inference and training spend at high cadence and flag anomalies (sudden model drift causing more compute, runaway retries). This reduces surprise bills. (data.finops.org)
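As a starting point before any ML forecasting, a trailing-window z-score is often enough to catch obvious spikes. The sketch below is that simple statistical baseline, not a forecasting model; the window size, threshold, and sample costs are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(hourly_cost, window=6, threshold=3.0):
    """Return indices whose cost is more than `threshold` standard
    deviations away from the mean of the preceding `window` values."""
    anomalies = []
    for i in range(window, len(hourly_cost)):
        history = hourly_cost[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A flat history gives no scale for a z-score; skipped in this sketch.
            continue
        if abs(hourly_cost[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical hourly inference cost with one runaway hour at index 7.
hourly_cost = [10.0, 11.0, 10.5, 9.8, 10.2, 10.7, 10.1, 31.0, 10.4]
spikes = flag_anomalies(hourly_cost)  # -> [7]
```

A production detector would also need to handle seasonality (daily and weekly traffic cycles), which a plain trailing window does not.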

Automated workload placement & accelerator selection. Use optimization agents to select spot/interruptible capacity vs reserved vs on-demand, and pick cheaper instance families (including newer efficient CPUs/ARM/Graviton or next-gen GPUs) while respecting latency/SLAs. (Cast AI)

Adaptive instance sizing & autoscaling tuned to model behavior. Instead of naive CPU/RAM autoscaling, scale based on model queue length, token throughput, and cost-per-token objectives.
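One way to picture model-aware scaling is to size the fleet from the token backlog rather than CPU load. This is a minimal sketch under assumed inputs: `queued_tokens`, the per-replica throughput, and the drain target are hypothetical metrics you would have to measure yourself, and `max_replicas` stands in for a cost ceiling.

```python
import math


def desired_replicas(queued_tokens, tokens_per_sec_per_replica,
                     target_drain_seconds, min_replicas=1, max_replicas=20):
    """Replicas needed to drain the token backlog within the target time,
    clamped between a floor (availability) and a ceiling (cost cap)."""
    needed_throughput = queued_tokens / target_drain_seconds
    replicas = math.ceil(needed_throughput / tokens_per_sec_per_replica)
    return max(min_replicas, min(max_replicas, replicas))


# 120,000 queued tokens, 500 tokens/s per replica, drain within 30 s.
busy = desired_replicas(120_000, 500, 30)
# Empty queue: fall back to the floor.
idle = desired_replicas(0, 500, 30)
# Huge backlog: the cost ceiling wins over the latency target.
capped = desired_replicas(10_000_000, 500, 30)
```

The ceiling is where the cost objective enters: when the backlog would require more replicas than the budget allows, latency degrades instead of spend growing without bound.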

Cost-aware model orchestration. Automatic model selection per request (smaller model for low-value requests, powerful model for premium flows) and conditional prompt engineering (e.g., reduce output length, summarize prior context) to control token-related costs. (IntuitionLabs)
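A routing layer of this kind can be sketched as "pick the cheapest model that is good enough for this request". The model names, prices, quality scores, and tier mapping below are hypothetical; in practice the quality requirement would come from your own evaluations and product policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # hypothetical price
    quality: int               # hypothetical 1-10 score from your own evals


MODELS = [
    ModelOption("small", 0.0005, 5),
    ModelOption("medium", 0.003, 7),
    ModelOption("large", 0.01, 9),
]


def required_quality_for(tier):
    """Map a customer tier to a minimum quality score (assumed policy)."""
    return {"free": 5, "standard": 7, "premium": 9}.get(tier, 7)


def route(required_quality, models=MODELS):
    """Return the cheapest model meeting the quality requirement."""
    eligible = [m for m in models if m.quality >= required_quality]
    if not eligible:
        # Nothing is good enough: fall back to the highest-quality model.
        return max(models, key=lambda m: m.quality)
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


free_choice = route(required_quality_for("free")).name
premium_choice = route(required_quality_for("premium")).name
```

Keeping the tier-to-quality policy in one explicit function makes it easy to review, which matters because routing premium users to a cheaper model should be a deliberate decision rather than a side effect.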

Closed-loop automation with human oversight. Automated suggestions are simulated and validated (A/B tests, small traffic slices) before roll-out; humans approve high-impact changes via policy guards.

Example – simple ROI snapshot (realistic illustration)

Imagine a monthly cloud spend of ₹500,000 (INR). Reducing it by 20% saves ₹100,000 per month.

Calculation: 500,000 × 0.20 = 100,000.

Now imagine your AI-driven toolkit (predictive rightsizing + spot usage + model routing) recovers 20–40% on AI-heavy workloads; at that rate, the savings often cover tooling and people costs within a few months. Vendor case studies report even larger wins in focused areas like Kubernetes cost optimizations. (Cast AI)
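The payback claim can be checked with a few lines of arithmetic. The spend and the 20–40% range come from the example above; the upfront and monthly running costs for tooling and people are hypothetical numbers added only to make the calculation concrete.

```python
def monthly_savings(monthly_spend, savings_rate):
    """Absolute monthly savings for a given spend and savings rate."""
    return monthly_spend * savings_rate


def payback_months(upfront_cost, monthly_running_cost, savings):
    """Months until cumulative net savings cover the upfront cost.
    Returns None when running costs eat all of the savings."""
    net = savings - monthly_running_cost
    if net <= 0:
        return None
    return upfront_cost / net


spend = 500_000                            # INR per month, from the example
low = monthly_savings(spend, 0.20)         # about 100,000
high = monthly_savings(spend, 0.40)        # about 200,000

# Hypothetical costs: 150,000 upfront, 40,000 per month for tooling and people.
payback_low = payback_months(150_000, 40_000, low)
payback_high = payback_months(150_000, 40_000, high)
```

With these assumed costs, the low end of the range pays back in roughly two and a half months and the high end in under one; different cost assumptions will move those figures considerably.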

A practical 8-step roadmap to adopt AI-driven FinOps

Instrument everything now. Tag compute/GPU, model, endpoint, data set, and customer. If it isn’t tagged, you can’t optimize it.

Build per-model cost metrics. Cost per token, cost per inference, cost per training epoch tied to business KPIs.

Deploy forecasting + anomaly models. Start with short-horizon (minutes → hours → days) forecasting for inference traffic and cost. (data.finops.org)

Automate low-risk actions. Auto-scale, schedule batch training to low-price windows, and use spot instances for noncritical work. Validate these via canary deployments. (Cast AI)

Add policy layer (guardrails). Approvals for expensive experiments, enforced budgets per team, and circuit breakers for runaway jobs.

Integrate FinOps with MLOps and SRE. Create joint runbooks and shared dashboards – finance, product, data science must be co-owners. (finops.org)

Model-aware purchasing. Use mixed buying strategies (reservations for steady baseline + autoscaling + spot for bursts). Measure marginal cost of every model change.

Continuous learning loop. Treat cost optimization like a product: iterate, measure, and ship improvements.
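The guardrail step in the roadmap above (approvals for expensive experiments, enforced team budgets, circuit breakers) can be sketched as a small policy check run before a job starts. The class name, thresholds, and decision labels are hypothetical; a real policy layer would sit in your scheduler or CI pipeline.

```python
from dataclasses import dataclass


@dataclass
class BudgetGuard:
    monthly_budget: float      # hard cap for the team
    approval_threshold: float  # single jobs above this need human sign-off
    spent: float = 0.0

    def check(self, estimated_cost):
        """Decide what to do with a job before it runs."""
        if self.spent + estimated_cost > self.monthly_budget:
            return "block"            # circuit breaker: budget would be exceeded
        if estimated_cost > self.approval_threshold:
            return "needs_approval"   # expensive experiment: route to a human
        return "allow"

    def record(self, actual_cost):
        """Add a finished job's actual cost to the running total."""
        self.spent += actual_cost


guard = BudgetGuard(monthly_budget=1000.0, approval_threshold=200.0)
small_job = guard.check(50.0)      # cheap and within budget
big_job = guard.check(300.0)       # within budget but above the sign-off threshold
guard.record(900.0)
late_job = guard.check(150.0)      # would push spend past the monthly budget
```

Checking the budget before the approval threshold means a job that cannot fit in the remaining budget is blocked outright instead of being sent to a reviewer who could not approve it anyway.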

Governance, risks, and what to watch out for

Opaque recommendations. AI agents can suggest optimizations that are correct on cost but harm UX or ML quality. Always validate with experiments.

Over-optimization of latency-sensitive flows. Don’t route premium users to cheaper but slower endpoints without explicit policy.

Vendor lock-in and explainability. AI tools that “magically” move workloads across clouds can introduce contractual or data-locality risks – keep human review for cross-cloud migrations.

Security & model drift. Cost anomalies may indicate abuse or model drift causing higher token usage; link anomaly detection with security ops. (wjaets.com)

Tools and patterns that matter in 2026

Kubernetes cost automation and cluster-level optimizers (for ephemeral AI workloads). (Cast AI)

Cost platforms that understand inference economics (token-aware billing, per-model attribution). (CloudZero)

LLM API monitoring and model-routing layers to pick the best engine per request. (IntuitionLabs)

FinOps practices updated with AI playbooks – treat AI spend as its own product line within FinOps. (data.finops.org)

Quick checklist: First 30 days

Start tagging (compute, model, endpoint, team).

Ship one dashboard: cost per model & cost per request.

Run a 2-week anomaly detection pilot on inference costs.

Automate one low-risk saving (e.g., scheduled batch jobs to cheaper windows or replace on-demand with spot for a single job).

Hold a 1-hour cross-functional “AI cost playbook” session (Finance + ML + SRE).

Closing – the mindset shift

Traditional FinOps reduced waste in a world of predictable VMs and reserve/commit decisions. In 2026, AI makes cloud spend dynamic, highly tied to user behavior and model design choices. The answer isn’t to hire more spreadsheet jockeys – it’s to make your FinOps practice model-aware, telemetry-rich, and automated. Combine financial governance with ML-driven automation and human guardrails, and you’ll turn AI from an unpredictable cost center into a managed, measurable part of product economics.
